The New York Yankees are heading into the 2024 season with a renewed sense...

In the 1940s, diving was a popular pastime, but it came with its own...

The Baseball Bar-B-Cast returns with a new episode after a relatively quiet Thursday in...

A recent study has shed light on the relationship between health equity and the...

The annual “ADNOC Pioneers” Forum recently took place with over 1,000 former and current...

As a business leader, you will find that travel is an essential part of growing and expanding your...

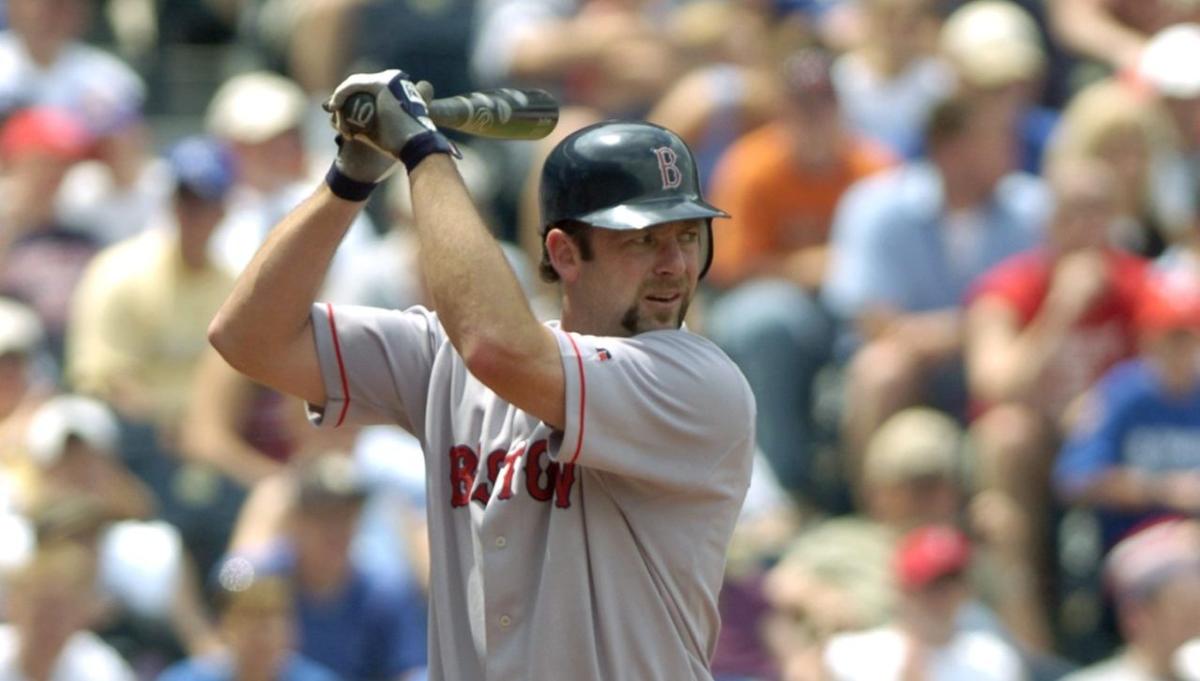

In 2004, the Boston Red Sox won their first World Series since 1918 with...

In recent years, Trend’s mobile application service has seen a decrease in blocked threats...

On April 19, 2024, the Chicago Bulls faced off against the Miami HEAT. The...

The Community Conversation Kit (CCK) is a valuable resource for leaders looking to engage...